Should we do, or not-do? Russell Ackoff, over many years, wrote about the potential (negative) consequences of each:

There are two possible types of decision-making mistakes, which are not equally easy to identify.

- (1) Errors of commission: doing something that should not have been done.

- (2) Errors of omission: not doing something that should have been done.

For example, acquiring a company that reduces a corporation’s overall performance is an error of commission, as is coming out with a product that fails to break even. Failure to acquire a company that could have been acquired and that would have increased the value of the corporation or failure to introduce a product that would have been very profitable is an error of omission [Ackoff 1994, pp. 3-4].
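Read as a decision table, Ackoff's two mistakes are two cells of a 2×2 grid: whether one acted, and whether, in hindsight, acting was the right call. The sketch below is a minimal Python illustration of that reading only. The names `Outcome` and `classify` are hypothetical, and the `should_have_acted` input assumes a hindsight judgment that, for errors of omission in particular, is often never recorded.

```python
from enum import Enum


class Outcome(Enum):
    """Hypothetical labels for the four cells of a do / not-do decision judged in hindsight."""
    RIGHT_ACTION = "did something that should have been done"
    ERROR_OF_COMMISSION = "did something that should not have been done"
    ERROR_OF_OMISSION = "did not do something that should have been done"
    RIGHT_INACTION = "did not do something that should not have been done"


def classify(acted: bool, should_have_acted: bool) -> Outcome:
    """Place one decision in the grid; only two of the four cells are mistakes."""
    if acted:
        return Outcome.RIGHT_ACTION if should_have_acted else Outcome.ERROR_OF_COMMISSION
    return Outcome.ERROR_OF_OMISSION if should_have_acted else Outcome.RIGHT_INACTION


# Ackoff's examples: a value-reducing acquisition, and a profitable product never launched.
print(classify(acted=True, should_have_acted=False).name)   # ERROR_OF_COMMISSION
print(classify(acted=False, should_have_acted=True).name)   # ERROR_OF_OMISSION
```

The asymmetry Ackoff goes on to describe sits in the inputs rather than the logic: `acted=True` usually leaves a trace in the records, while `acted=False` usually does not.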

Ackoff has always been great with turns of phrase such as these. A deeper reading evokes three ideas that may be worth further exploration:

- 1. Doing or not-doing may or may not invoke learning.

- 2. Doing or not-doing invokes implicit orientations on time.

- 3. Doing or not-doing raises questions of (i) changes via systems of willful action, and/or (ii) changes via systems of non-intrusive action.

These three ideas, explored in the sections below, lead us from the management of human affairs, beyond questions of science, and into questions of philosophy.

For those interested in the history of philosophy and science, the three ideas above are followed by an extra section:

- Appendix. Doing or not-doing in management can be placed philosophically in American pragmatism.

The question of doing or not-doing runs deep in the intellectual traditions of American management thinking in the late 20th century. The “Bias for Action” espoused by Tom Peters, first published in 1982, exhorts managers to do. In a 2001 report card, Peters describes the shift from the 1962 “Bias of planning” to the 1982 “Bias for action”, and in a 2018 interview he observes that it has become the first of eight commandments in Silicon Valley.

1. Doing or not-doing may or may not invoke learning

One way of framing doing and not-doing is around decision-making mistakes. In 1994, Ackoff was advocating strongly for organizational learning. He criticized executives who suppress the surfacing of prior errors that might preclude the recurrence of mistakes.

There is a company for which I have been conducting research for more than thirty years. Twice in the last few months I was informed about the outcome of research, just completed by company personnel, that I had conducted many years earlier with the same results. None of those who were involved in the recent research, including the managers who asked for it, had been in the company when I had done my work. What had been learned years ago through my work had been lost. The organization had no memory of it. Without memory, learning cannot be retained. Learning that cannot be retained is a contradiction. Therefore, memory is necessary for learning.

Memory, however, is only necessary, not sufficient for organizational learning. Without the ability to identify and correct mistakes, learning how to improve decision making does not take place. When one does something right, one only confirms what is already known: how to do it. A mistake is an indicator of a gap in one’s knowledge. Learning takes place when a mistake is identified, its producers are identified, and it is corrected.

Unfortunately, most organizations are “programmed” to conceal mistakes from those who make them. The higher the rank of decision makers, the less likely they are to be made aware of the mistakes they make. Corporate executives try to create the impression that they are infallible. When a major decision-related mistake in a corporation is identified, it is usually impossible to find out who is responsible for it. As a result, learning does not take place. Those who make a mistake and are aware of it try to conceal the fact from others [Ackoff 1994, p. 3].

Historically oriented accounting records do not, unfortunately, fully capture errors.

Accounting systems are able to identify errors of commission, even though they often fail to do so. For example, most large capital expenditures are made only after a study has been conducted which produces an estimated return on the investment. Most accounting systems are capable of subsequently determining retrospectively whether or not this estimate was correct.

Errors of omission are horses of a different color. Decisions not to do something are seldom made a matter of record. Therefore, it is at best very difficult to become aware subsequently of the fact that a mistake was made, let alone who made it. In many companies I have tried in vain to find out who failed to buy another company that, with hindsight, clearly should have been bought; or who in a particular railroad decided not to convert its locomotives to diesel when it became available; or who in a brewing company failed to come out with a light beer, although it was suggested, until after a competitor did so with great success.

Because errors of commission are easier to identify than errors of omission, many decision makers try to avoid making errors of commission by doing nothing. Although this increases their chances of making an error of omission, these errors are harder to detect [Ackoff 1994, p. 4].

Looking forward, managers have the authority and responsibility for organizational decisions to do or not-do, and will later be judged on results and consequences.

A manager’s job includes getting some things done and preventing others from being done. These effects are the intended outcomes of decisions. Decisions convert information, knowledge, and understanding into instructions for what should or should not be done. Information, knowledge, and understanding can be learned. The improvement of decision making with time or repetition is learning. In a changing environment such as we are currently experiencing, decision making deteriorates without learning. This increases the importance of identifying and correcting mistakes, without which learning cannot take place.

One thing that can be done to increase the likelihood of detecting mistakes and correcting them is to produce a “Decision Record” every time a decision of any importance is made, including decisions to do nothing [Ackoff 1994, p. 4].
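A decision record can be sketched as a small data structure plus a later review step that compares expectations with observed results. The Python below is a hypothetical illustration: the class `DecisionRecord`, its fields, the `review` function and the 10% tolerance are all assumptions made for the example, not the contents of Ackoff's own Decision Record. The one feature taken directly from the text is that a decision to do nothing gets a record too.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class DecisionRecord:
    """Hypothetical decision record; the field names are illustrative assumptions."""
    decided_on: date
    decided_by: str
    description: str
    do_nothing: bool  # True records a decision NOT to act, which otherwise rarely leaves a trace
    expected_effects: dict = field(default_factory=dict)  # effect name -> expected value
    assumptions: list = field(default_factory=list)       # beliefs the expectations rest on


def review(record: DecisionRecord, observed: dict, tolerance: float = 0.1) -> list:
    """Flag expected effects that were not observed or that deviate by more than
    `tolerance` (a fraction of the expected value); each flag is a candidate mistake."""
    flagged = []
    for effect, expected in record.expected_effects.items():
        actual = observed.get(effect)
        if actual is None:
            flagged.append(f"{effect}: not observed")
        elif abs(actual - expected) > tolerance * abs(expected):
            flagged.append(f"{effect}: expected {expected}, observed {actual}")
    return flagged


# Hypothetical example echoing Ackoff's brewer: a recorded decision NOT to launch a light beer.
record = DecisionRecord(
    decided_on=date(1990, 1, 15),
    decided_by="product committee",
    description="Do not launch a light beer",
    do_nothing=True,
    expected_effects={"market_share": 0.20},
    assumptions=["Light beer will not catch on"],
)
print(review(record, observed={"market_share": 0.15}))
# ['market_share: expected 0.2, observed 0.15']
```

Without such a record, the committee's assumption would be hard to revisit later; with it, a flagged deviation points at a specific belief to re-examine, and at who held it.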

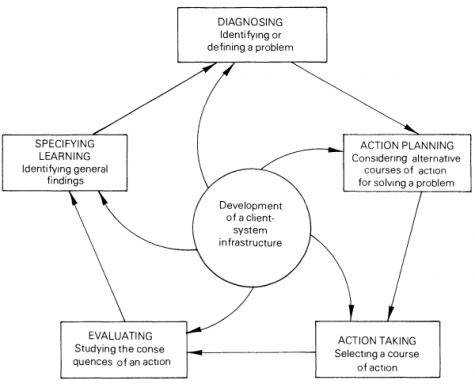

A decision record of this kind fits naturally into an action research approach. The action research cycle has been depicted in a variety of ways. While some (including originator Kurt Lewin) draw four steps, I like the five-phase cycle by Susman and Evered [1978].

Rapoport’s (1970: 499) definition of action research is, perhaps, the most frequently quoted in contemporary literature on the subject:

Action research aims to contribute both to the practical concerns of people in an immediate problematic situation and to the goals of social science by joint collaboration within a mutually acceptable ethical framework.

To the aims of contributing to the practical concerns of people and to the goals of social science, we add a third aim, to develop the self-help competencies of people facing problems. [….]

While Rapoport’s definition of action research focuses on aim, action research can also be viewed as a cyclical process with five phases: diagnosing, action planning, action taking, evaluating, and specifying learning. The infrastructure within the client system and the action researcher maintain and regulate some or all of these five phases jointly (Figure) [Susman and Evered 1978, p. 588].
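The five-phase cycle can be expressed as a tiny state machine in which the last phase hands back to the first. The following Python sketch assumes only the phase names and their order as given by Susman and Evered; `Phase`, `next_phase` and `run_cycles` are hypothetical names, and the sketch says nothing about who (the client system or the action researcher) performs each phase.

```python
from enum import Enum


class Phase(Enum):
    """The five phases named by Susman and Evered, in their order."""
    DIAGNOSING = 1
    ACTION_PLANNING = 2
    ACTION_TAKING = 3
    EVALUATING = 4
    SPECIFYING_LEARNING = 5


def next_phase(phase: Phase) -> Phase:
    """Advance one phase; specifying learning wraps around to diagnosing."""
    phases = list(Phase)
    return phases[(phases.index(phase) + 1) % len(phases)]


def run_cycles(start: Phase, steps: int) -> list:
    """Return the phases visited when taking `steps` transitions from `start`."""
    visited = [start]
    for _ in range(steps):
        visited.append(next_phase(visited[-1]))
    return visited


print([p.name for p in run_cycles(Phase.EVALUATING, 3)])
# ['EVALUATING', 'SPECIFYING_LEARNING', 'DIAGNOSING', 'ACTION_PLANNING']
```

The wrap-around is what makes it a cycle: what is specified as learning in one pass becomes input to the next diagnosis, which is one natural place to consult a decision record.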

The 1994 article “It’s a Mistake!” is broadly consistent with a later 2006 article, “Why Few Organizations Adopt Systems Thinking” [Ackoff 2006]. Sjon van ’t Hof has reviewed that article with a concept map.

If organizations are not of the learning type, they don’t care much about errors of omission. As a result managers don’t mind either. Instead they will make every effort to avoid errors of commission [van ’t Hof 2015].

Collectively, we should welcome errors as an opportunity to learn. If an individual has made an error, it takes someone else to inform him or her of it. Organizationally, an error as passive ignorance (i.e., we didn’t know, thanks for pointing that out!) should not become an error as active ignorance (i.e., a denial or taboo: sorry, we won’t discuss that) [Ing, Takala and Simmonds 2003].

2. Doing or not-doing invokes implicit orientations on time

Going back to Ackoff’s 1981 writing, his chapter “Our Changing Concept of Planning” speaks to errors of commission and errors of omission in a different context. The interested reader can look further into the four orientations, which are summarized briefly here.

A Typology of Planning

The concept of planning developed here is best understood by contrasting it with the alternative concepts that currently prevail. The essential differences between these concepts derive from their temporal orientations.

- The dominant orientation of some is to the past, reactive;

- of others to the present, inactive; and

- of still others to the future, preactive (see Table 3.1).

Table 3.1 The Four Basic Orientations to Planning

| Orientation | Past | Present | Future |
|---|---|---|---|
| Reactive | + | – | – |
| Inactive | – | + | – |
| Preactive | – | – | + |
| Interactive | +/- | +/- | +/- |

+ = favourable attitude; – = unfavourable attitude

- A fourth orientation, interactive, the one developed here, regards the past, present and future as different but inseparable aspects of the mess to be planned for; it focuses on all three equally.

It is based on the belief that unless all three temporal aspects of a mess are taken into account, development will be obstructed [Ackoff 1981, pp. 52-53, editorial paragraphing added].

[….]

- Recall that inactivists are willing to settle for doing well enough, to satisfice;

- preactivists want to do as well as possible, to optimize.

- Interactivists want to do better in the future than the best we are capable of doing now, to idealize.

- Therefore, interactivists focus on improving performance over time rather than on how well they can do at a particular time under particular conditions. Their objective is to maximize their ability to learn and adapt, to develop [Ackoff 1981, p. 63, editorial paragraphing added].

In the categorization of doing and not-doing, idealizing towards “better in the future” underscores a bias for action. Ackoff clearly declared his predisposition.

My presentation of the four orientations to planning has, of course, been biased. I am a proponent of the best interactive approach and advocate it because I believe it provides the best chance we have for coping effectively with accelerating change, increasing organizational complexity, and environmental turbulence. Moreover, it is the only one of the four orientations that explicitly addresses itself to increasing individual, organizational, and societal development and improving quality of life [Ackoff 1981, p. 65].

Interactivists were prescribed the techniques of interactive planning and interactive design. After his retirement as a professor in 1986, Ackoff chose to emphasise his ideas of Interactive Planning and Idealized Design. He was named chairman of the board of Interact: The Institute for Interactive Management (at interactdesign.com in 1998), and of Interact: The Center for Interactive Systems Design (at interactdesign.com in 2013, after Ackoff’s passing in 2009).

I’ll leave those interested in Interactive Planning to read the book. Here are some short excerpts of what you’ll find.

The way interactive planning is carried out depends on three operating principles: the participative principle, the principle of continuity, and the holistic principle.

The Participative Principle

Most planners and consumers of plans believe that the principal benefit of planning comes from use of its product, a plan. The interactivist denies this. He asserts that in planning, process is the most important product. Therefore the principal benefit of it derives from engaging in it. [….]

The Principle of Continuity

Most planning is done discontinuously. […] A designated part of each year, usually months, is used for preparing the plan. [….]

Because of events that are not and could not be foreseen, no plan, however carefully it is prepared, works as expected. Therefore, the effects expected from implementing plans and the assumptions on which these expectations are based should be continuously reviewed. [….]

The Holistic Principle

This principle has two parts: the principle of coordination and the principle of integration. Each has to do with a different dimension of organization. […]

The principle of coordination states that no part of an organization can be planned for effectively if it is planned for independently of any other unit at the same level. Therefore, all units at the same level should be planned for simultaneously and interdependently. […]

The principle of integration states that planning done independently at any level of a system cannot be as effective as planning carried out interdependently at all levels. It is common knowledge, for example, that a policy or practice established at one level of a corporation often creates problems at other levels. Therefore the solution to a problem that appears at one level may best be obtained by changing a policy or practice at another [Ackoff 1981, pp. 65-73].

There’s no question that Ackoff is thorough in his conception of Interactive Planning. The approach follows traditions (emerging concurrently) from the Tavistock Institute of Human Relations, in The Socio-Psychological Perspective, The Socio-Technical Systems Perspective, and The Socio-Ecological Systems Perspective.

3. Doing or not-doing raises questions of (i) changes via systems of willful action, and/or (ii) changes via systems of non-intrusive action

Challenging Ackoff’s criticism of inactivists (as satisficing) versus interactivists (as idealizing, wanting to do better in the future than the best we are capable of doing now) leads us to resolving, solving or dissolving problems.

The selection of a means to fill a planning gap is a planning problem. Like any other problem, those that arise in planning can be treated in three ways: they can be resolved, solved or dissolved.

To resolve a problem is to select a means that yields an outcome that is good enough, that satisfices. I call this approach clinical because it relies heavily on past experience and current trial and error for its inputs. It is qualitatively, not quantitatively, oriented; it is rooted deeply in common sense; and it makes extensive use of subjective judgements.

Most managers are problem resolvers. [….] Reactive planners tend to be problem resolvers.

To solve a problem is to select a means that is believed to yield the best possible outcome, that optimizes. I call this the research approach, because it is largely based on scientific methods, techniques and tools. It makes extensive use of mathematical models and real or simulated experiments; therefore, it relies heavily on observation and measurement, both of which it aspires to carry out as objectively as possible. [….]

The research approach is used most heavily by management scientists and technologically oriented managers, whose principal organizational objective tends to be thrival rather than mere survival. They are growth seekers. […]

To dissolve a problem is to change the nature of either the entity that has it or its environment in order to remove the problem. Problem dissolvers idealize rather than satisfice or optimize because their objective is to change the system involved, or its environment, to bring it closer to an ultimately desired state, one in which the problem cannot or does not arise. I call this the design approach.

Designers make use of the methods, techniques and tools of both clinicians and researchers, and much more; but they use them synthetically, rather than analytically. They try to dissolve problems by changing the characteristics of the system that contains the part with the problem; they look for dissolutions in the containing whole rather than for solutions in the contained parts.

The design approach is used by the minority of managers and management scientists whose principal organizational objective is development rather than growth or survival. Interactive planners tend to be problem dissolvers. [Ackoff 1981, pp. 170-172, editorial paragraphing added].
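The difference between resolving (satisficing) and solving (optimizing) can be stated as two selection rules over the same set of options. The Python below is a minimal sketch under that assumption; the function names, the scoring function and the aspiration level `good_enough` are hypothetical. Dissolving is deliberately absent, because in Ackoff's terms it changes the system or environment that produces the options, rather than choosing among them.

```python
from typing import Callable, Iterable, Optional, TypeVar

T = TypeVar("T")


def resolve(options: Iterable[T], score: Callable[[T], float], good_enough: float) -> Optional[T]:
    """Satisfice: take the first option, in the order considered, that meets the aspiration level."""
    for option in options:
        if score(option) >= good_enough:
            return option
    return None  # nothing was good enough


def solve(options: Iterable[T], score: Callable[[T], float]) -> Optional[T]:
    """Optimize: evaluate every option and return the one scored best."""
    return max(options, key=score, default=None)


# Hypothetical options, considered in this order, with made-up scores.
scores = {"patch the process": 0.6, "buy new equipment": 0.8, "retrain staff": 0.9}
options = list(scores)
print(resolve(options, scores.__getitem__, good_enough=0.5))  # patch the process
print(solve(options, scores.__getitem__))                     # retrain staff
```

The satisficer stops as soon as an option clears the bar, so the order in which options are considered matters; the optimizer is indifferent to order but must evaluate everything.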

Ackoff led the Social Systems Science program at the University of Pennsylvania, so Interactive Planning was focused on designing social systems. Beyond human affairs, did Ackoff write about the natural world? In a 1996 paper, “Reflections on Systems and Their Models” [Ackoff and Gharajedaghi 1996], traced in “System types as purposeful, and displaying choice” (2015), nature shows up.

Table 1: Types of systems

| Type | Parts | Whole | Example |
|---|---|---|---|
| Deterministic | No choice | No choice | Clock |
| Ecological | Choice | No choice | Nature |
| Animate | No choice | Choice | Person |
| Social | Choice | Choice | Corporation |

Thus, attempting to intervene in ecological systems (as defined by Ackoff) by dissolving a problem in nature, where the whole doesn’t have choice, means working through a mess (i.e. a problematique, where “a set of two or more independent problems constitute a system” [Ackoff 1981, p. 52]). We would ideally like to dissolve the mess, which goes beyond solving or resolving a problem. Are ecological systems then beyond interactivists?

Let’s return to Ackoff’s description of inactivists, and how they fit into the systems movement.

Recall that inactivists focus on not doing things that should not be done (avoiding errors of commission) and preactivists on doing things that should be done (avoiding errors of omission). Interactivists try to avoid both but are generally more concerned with two other types of error that are generally ignored: asking the wrong questions or solving the wrong problems and not asking or solving the right ones. They believe we fail more often because of an inability to face the right problems than because of an inability to solve the problems we face [Ackoff 1981, p. 63, editorial paragraphing added].

Thus, beyond errors of commission and errors of omission, there are more types of errors! Ian Mitroff has written about these.

Table 2: Types of errors

| Error | Characterization | Description |
|---|---|---|
| Type 1 error | False positive | Finding a (statistical) relation that isn’t real |
| Type 2 error | False negative | Missing a (statistical) relation that is real |
| Type 3 error | Tricking ourselves | Unintentional error of solving the wrong problems precisely (through ignorance, faulty education or unreflective practice) |
| Type 4 error | Tricking others | Intentional error of solving the wrong problems (through malice, ideology, overzealousness, self-righteousness, wrongdoing) |

The basic ideas behind the Type One and Type Two Errors are easy to grasp. Suppose one is interested in testing whether a new drug is better than an old one at treating headaches. In the process of giving the new drug and old drug to two evenly matched groups … two errors can be made.

First, one can conclude wrongly that the new drug is better than the old one when actually the old one is better or equal to the new one. This is known as the Type One Error, or E1. E1 is akin to saying that there’s a meaningful difference between the two drugs when there is not.

Second, one can also conclude wrongly that the old drug is better than the new one when in fact the new one is better. This is known as the Type Two Error, or E2. E2 is akin to saying that there is not a meaningful difference between the drugs when there is. […]

The Type Three Error is the unintentional error of solving the wrong problems precisely.

[The] Type 4 error is the intentional error of solving the wrong problems. […]

The Type Three Error is primarily the result of ignorance, a narrow and faulty education, and unreflective practice.

In contrast, the Type Four Error is the result of deliberate malice, narrow ideology, overzealousness, a sense of self-righteousness, and wrongdoing. … [Every] Type Four Error is invariably political or has strong political elements … [Mitroff and Silvers 2010, pp. 3-5]
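For the drug trial in Mitroff and Silvers' example, E1 and E2 can be written down as a function of what was concluded and what is actually the case. The Python sketch below is a hypothetical illustration (the name `statistical_error` is an assumption). Its limitation is instructive: the function takes the question "is the new drug better?" as given, so no input could register a Type Three or Type Four Error, which are about whether that was the right question to ask.

```python
from typing import Optional


def statistical_error(concluded_new_is_better: bool, new_is_actually_better: bool) -> Optional[str]:
    """Label a drug-trial conclusion as E1 or E2, or return None if it was correct."""
    if concluded_new_is_better and not new_is_actually_better:
        return "E1"  # Type One: claims a meaningful difference that is not there
    if not concluded_new_is_better and new_is_actually_better:
        return "E2"  # Type Two: misses a meaningful difference that is there
    return None


# Enumerate all four combinations of conclusion and reality.
for concluded in (True, False):
    for actual in (True, False):
        print(concluded, actual, statistical_error(concluded, actual))
```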

These descriptions of errors are still associated with human social systems. We would like to think that human beings can learn from errors. Beyond human beings, Gregory Bateson suggests Learning IV, which is an evolutionary process that combines phylogenesis with ontogenesis.

Escaping the paradigm of American pragmatism means turning to an alternative philosophy. Keekok Lee suggests contextual dyadic thinking as a foundation for classical Chinese medicine, a path different from the dualistic thinking of modern Western philosophy. In that philosophy, doing and not-doing by individuals and/or organizations may be related to systems changes via willful action and/or non-intrusive action, as translations of wéi and wú wéi (i.e. 為 and 無為; or 为 and 无为) towards science.

Appendix. Doing or not-doing in management can be placed philosophically in American pragmatism

In Ackoff’s work, the framing of commission/omission and doing/not-doing derives from a school of American pragmatism. Ackoff was only 6 years younger than his Ph.D. supervisor, West Churchman, who guided him on this path.

Ackoff was more successful than Churchman in turning his action-orientated philosophizing based on Singerian pragmatism into practical methods that could be applied by practitioners. He argued that objectivity through modelling was impossible; objectivity can only be approached by groups of individuals with diverse values. His approach ‘interactive planning’ involves gaining the participation of stakeholders in the design of desirable futures and bringing them about. His work has had a major impact on the OR and systems communities, particularly in the UK.

Jackson (2000) observes that Ackoff’s approach has also been criticized for its ‘subjectivism’ and its ‘idealism’. Further, he says that Ackoff is accused of not giving serious attention to deep-seated conflict and coercion and of relying too much on participation as a remedy for organizational problems. He is also accused of artificially limiting the scope of his projects so as not to challenge his client’s or sponsor’s fundamental interests. Ackoff believes his critics are obsessed with the notion of irresolvable conflicts. He points out that he has not encountered such conflicts in more than 300 projects (pp 243–246). [Ormerod 2006, p. 905]

All the conflicts [Ackoff] has met, he has been able to address with the interactive planning approach. He suspects that his critics merely assert that such conflicts exist; if they went out and tried to use interactive planning on conflicts they see as irresolvable, they might find out differently.

With regard to participation, Ackoff (1975) accepts that it might meet with some resistance from powerful stakeholders. But there are ways around this, such as by introducing stakeholders first as consultants and then gradually increasing their role. [….] Better incremental change, Ackoff argues, than waiting for some judgment day when all wrongs will be corrected. [….] The chief obstruction between people and the future they most desire is the people themselves and their limited ability to think creatively and imaginatively. Provide people with a mission, with a mobilizing idea, and the constraints on their and their organization’s development will largely disappear.

In the exchanges between Ackoff and his critics we are witnessing a war between sociological paradigms …. [Jackson 2000, p. 246]

The philosophy flows to Ackoff, via West Churchman, from Churchman’s doctoral supervisor, Edgar A. Singer, Jr.

Singer was a student of William James, who regarded him as “the best all-round man for philosophic business whom we have had among our students in the third years during which I have given instruction in philosophy here” at Harvard University. James admired in Singer the completeness with which he dealt with all facets of philosophy, and they shared a concern for humanity and the pragmatic conditions under which people must make a life for themselves. They both sought progress. [….]

Singer viewed progress as a visionary might, not as a historian. Progress is going some of the “toilsome way toward the conquest we dream of” (1936, p. 89). It lies in closing the gap between where you are and where you desire to be, which is not necessarily how far you have traveled from whence you started. His measure of performance is clear: “The measure of man’s cooperation with man in the conquest of nature measures progress” (1936, p. 89).

This statement summarizes Singer’s values. “Cooperation” is the operative word in this description of progress, for Singer’s concept of cooperation is quite inclusive. “Conquest”, I believe, is used in the classical sense of its Latin roots com and quaerere, which denote getting what one seeks. As I will discuss later, the accent is on acquiring the knowledge necessary to achieve one’s goals, not on exploiting nature.

A progressive is one who has sought to live life by these values. It is life’s highest calling. How and why the progressive’s life is to be lived is the task of Singer’s philosophy. It begins with a metaphysical assumption about people and their place in the universe. [….]

There is something that keeps propelling you as a group of points through space. Singer called this the “pulse,” indeed he referred to this as “the pulse of life”. […]

You, as a spaceship in the universe, have these same properties. You are a group of points. The group is in the nature of a pulse, and the pulse is defined by its purpose, that is, it is teleological. This means that to some extent you are the captain of your own spaceship, that you make choices, and that you have a free will.

Are these choices without limit? No, but this is partly a function of your mind. If, as I guide my spaceship, I can accomplish my purpose in n situations or “states of the world” the universe may present me with, then n defines the limits of my choice and the capacity of my mind. Suppose someone else can respond effectively in n + 1 situations. That person then has a broader capacity of mind and hence is better equipped to deal with the world than am I. Furthermore, if in the course of my travels the world can face me with m situations, then my “requisite variety” for success, to use Ross Ashby’s term, is that I possess a repertoire of at least m responses.

It is worthwhile to note here that Singer with this conceptualization has broken through one of philosophy’s classic impasses. The universe is mechanistic, yet the individual has a mind and a free will. The two coexist in the same world. There are some things in the universe that function as Aristotle contended. You and I think using concepts. We can reason and have intentions and purposes. At the same time, everything in the universe is mechanical, as Democritus contended, and has an independent structure to it. [Mason 1992, pp. 32-33]
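Mason's arithmetic of n handled situations, m possible situations and a repertoire of responses can be made concrete with sets and a mapping. The following Python sketch is a hypothetical illustration only: a repertoire is assumed to be a mapping from each situation the traveller can handle to a response, `capacity_of_mind` counts Mason's n, and `has_requisite_variety` applies his reading of Ashby's term as at least m distinct responses covering all m situations.

```python
def capacity_of_mind(repertoire: dict, situations: set) -> int:
    """Mason's n: how many of the given situations have a response (assumed to be effective)."""
    return sum(1 for s in situations if s in repertoire)


def has_requisite_variety(repertoire: dict, situations: set) -> bool:
    """At least m distinct responses, with every one of the m situations covered."""
    m = len(situations)
    responses = {repertoire[s] for s in situations if s in repertoire}
    return capacity_of_mind(repertoire, situations) == m and len(responses) >= m


# Hypothetical spaceship: the world can present three situations.
world = {"meteor shower", "fuel leak", "solar flare"}
mine = {"meteor shower": "raise shields", "fuel leak": "seal valve"}
theirs = {**mine, "solar flare": "hide behind planet"}

print(capacity_of_mind(mine, world), capacity_of_mind(theirs, world))              # 2 3
print(has_requisite_variety(mine, world), has_requisite_variety(theirs, world))    # False True
```

The second traveller, able to respond in n + 1 situations, is in Mason's sense the broader mind. Whether two situations could share one response without violating requisite variety is a subtlety this sketch sidesteps by requiring distinct responses.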

Ackoff and Churchman were directly involved in the operations research movement [Churchman, Ackoff and Arnoff 1957]. Churchman was a mathematical statistician in the U.S. Army during WWII, and instrumental in spinning off a group from the Operations Research Society of America (ORSA) to launch The Institute of Management Sciences (TIMS) [Mason and Mitroff 2015]. These organizations merged in 1995 to become INFORMS.

References

Ackoff, Russell L. 1981. “Our Changing Concept of Planning.” In Creating the Corporate Future: Plan or Be Planned For, 51–76. New York: John Wiley and Sons.

Ackoff, Russell L. 1994. “It’s a Mistake!” Systems Practice 7 (1): 3–7. https://doi.org/10.1007/BF02169161.

Ackoff, Russell L. 2006. “Why Few Organizations Adopt Systems Thinking.” Systems Research and Behavioral Science 23 (5): 705–708. https://doi.org/10.1002/sres.791.

Ackoff, Russell L., and Jamshid Gharajedaghi. 1996. “Reflections on Systems and Their Models.” Systems Research 13 (1): 13–23. https://doi.org/10.1002/(SICI)1099-1735(199603)13:1<13::AID-SRES66>3.0.CO;2-O.

Churchman, C. West, Russell L. Ackoff, and E. Leonard Arnoff. 1957. Introduction to Operations Research. New York: John Wiley & Sons. https://archive.org/details/introductiontoo00chur.

Ing, David, Minna Takala, and Ian Simmonds. 2003. “Anticipating Organizational Competences for Development through the Disclosing of Ignorance.” In Proceedings of the 47th Annual Meeting of the International Society for the System Sciences, Hersonissos, Crete, July 7-11, 2003.

Jackson, Michael C. 2000. Systems Approaches to Management. New York: Kluwer.

Mason, Richard O. 1992. “The Apostle of Progress.” In Planning for Human Systems: Essays in Honor of Russell L. Ackoff, edited by Jean-Marc Choukroun and Roberta M. Snow, 31–48. Philadelphia: Busch Center, Wharton School of the University of Pennsylvania.

Mason, Richard O., and Ian I. Mitroff. 2015. “Charles West Churchman — Philosopher of Management: An Interview With Richard O. Mason Conducted by Ian I. Mitroff.” Journal of Management Inquiry 24 (1): 37–46. https://doi.org/10.1177/1056492614537708.

Mitroff, Ian I., and Abraham Silvers. 2010. Dirty Rotten Strategies: How We Trick Ourselves and Others into Solving the Wrong Problems Precisely. Stanford University Press.

Ormerod, Richard. 2006. “The History and Ideas of Pragmatism.” The Journal of the Operational Research Society 57 (8): 892–909. https://doi.org/10.1057/palgrave.jors.2602065.

Susman, Gerald I., and Roger D. Evered. 1978. “An Assessment of the Scientific Merits of Action Research.” Administrative Science Quarterly 23 (4): 582–603. https://doi.org/10.2307/2392581.

van ’t Hof, Sjon. 2015. “Summarizing ‘Why Few Organizations Adopt Systems Thinking.’” CSL4D/SystemicAgency (blog). April 11, 2015. https://csl4d.wordpress.com/2015/04/11/abstracts-and-concept-maps-compared/.